Waiting for the Other Sheep to Drop

Should recent changes in college education make the sheepskin effect smaller? Can we check yet if they have? Let's give it a shot.

Most of what I’m known for these days is econometrics and causal inference. But where I started out (and still sometimes dabble) is in the economics of education. A subject that has held my attention that entire time is the model of costly education signaling. The basic idea is this: we see that giving people education tends to boost their income, and that this association seems to be causal. The question is why.

One explanation is that you learn things in your education that make you a more valuable / productive worker. You earn more because you learn more (this is the “human capital accumulation” explanation). Another explanation is that a good way to pick out talented workers from the crowd is to make them do something hard (like, say, being organized, smart, hard-working, and well-resourced enough to get all the way through your education). If they can do it, even if the experience didn’t change them, it lets talented people set themselves apart and plausibly identify themselves, so people who manage to make it through education get offered higher wages.1 This latter explanation is the signaling-model explanation of why people with more education earn more.

One implication of the signaling model is that we should see a sheepskin effect. Under human capital accumulation, we’d expect that you learn a little more each year, and so we’d expect that your earnings should go up a bit for each year of education you get, and we might not expect the final year to be anything special. But under signaling, what matters is being able to show off that you can do the hard thing, so we should see that the returns to the year of education where you get the degree should be higher than the other years.

And… we do see that, absolutely. Focusing on the returns to a college education, the empirical regularity is that the jump in earnings going from “just before graduation” to “graduated” is much larger than the impact of the other years of education, and this holds across many geographic and institutional contexts, and different time periods as well.2

Why do I bring this up?

Well, there’s been an interesting change in college education in the past few years. In particular, COVID marked a drastic shift in the strictness of standards and grading. It’s obvious why - are you really gonna be a stickler for turning something in on time when millions of people are dying of a mysterious disease? Fault the class when everyone does poorly on the exam? Regardless of whether or not it’s good policy, an increased tendency to graduate someone who may not have passed certain markers of success is, well, one less chance to prove that you are more capable than others of passing those markers of success. Additionally, LLMs and other recent technological developments are making it much easier to cheat your way through college. So even if I work hard to prove that I can write an essay worth an A, doing so no longer sets me apart as much from other students, some of whom might choose to get LLMs to write them an essay at least good enough to graduate.

Both of these make college less of a hard thing that some people can do and others can’t. It loses some of its distinguishing properties. The signaling model predicts, then, that the signal should become less effective.

So, back to sheepskin effects. The signaling model predicts sheepskin effects. We see real-world changes that should reduce the effectiveness of the signal. Do we see a reduction in sheepskin effects?

Granted, the sheepskin effect going up or down doesn’t perfectly map to signaling. As I try really hard to emphasize in my paper about using data to understand signaling and human capital, there are plenty of ways in which sheepskin effects can be explained by human capital accumulation rather than signaling.3 But there is some link between signaling and sheepskin, so perhaps at least the change in sheepskin effects over time will give us a little clue as to whether the thing I suspect is happening is actually happening.

I decided to do a very first-pass take on the question, specifically looking at the sheepskin effect for a Bachelor’s degree in the US. It’s a bit too soon to really see the changes, but maybe we get some nice hints or some interesting patterns. This is the kind of exploration you might do when thinking about a project you’d treat more seriously in the future. To be very clear, the analysis I’m doing in this post is on the rigor standards of “I made a blog post in a few days” and not “it’s a published academic study.” So take it at that level. I will try to point out caveats and weaknesses along the way.3

Data

For this analysis I gathered Current Population Survey data from IPUMS for July 2010-January 2024.4 The Current Population Survey is collected monthly, and has some basic labor market information; it’s the data set used to calculate unemployment figures in the US. The set of work variables, especially with regard to earnings, is a little limited, but conveniently for our purposes, it distinguishes between “number of years in school” and “having a degree.” The monthly nature of the data also lets me define a “year” on school-year terms, so I count “2010” as being July 2010 - June 2011.
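To make the school-year definition concrete, here is a minimal sketch of the mapping from a survey month to a school-year label. The function name `school_year` is made up for illustration; the only rule it encodes is the one stated above, that July through the following June share the label of the year in which July falls.

```python
def school_year(year: int, month: int) -> int:
    """Map a survey month to a school year running July through June.

    The school year is labeled by the calendar year in which it starts,
    so July 2010 through June 2011 are all labeled 2010.
    """
    if not 1 <= month <= 12:
        raise ValueError(f"month must be 1-12, got {month}")
    # July-December belong to the school year starting that calendar year;
    # January-June belong to the one that started the previous July.
    return year if month >= 7 else year - 1
```

Under this rule the last label in the data, “2023,” only covers July 2023 through January 2024, so it is a partial year.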

Because I want to see changes in the sheepskin effect over time, I need to focus on the very young. If I took the entire population of workers, then each new cohort of graduates would make up only a tiny fraction of the sample and we wouldn’t see anything. So I look at people aged 22-27.

I also want to focus on the group of people who have gone to a four-year college and left (with or without a degree), on a traditional-student timescale. So I limit things to non-veterans who have some college experience but aren’t currently in college, and don’t have an Associate’s Degree (2-year degree from a community college).
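The sample restrictions can be written as a single filter. This is only a sketch: every field name below (`age`, `veteran`, `attended_college`, `enrolled_now`, `associates_degree`) is a hypothetical stand-in, not an actual CPS or IPUMS variable name, and the real coding of each condition would depend on the extract.

```python
def in_sample(person: dict) -> bool:
    """Return True if a respondent meets the sample restrictions in the text.

    All field names are hypothetical stand-ins, not real CPS variable names.
    """
    return (
        22 <= person["age"] <= 27            # young workers only
        and not person["veteran"]            # non-veterans
        and person["attended_college"]       # some college experience
        and not person["enrolled_now"]       # has left college
        and not person["associates_degree"]  # no two-year degree
    )
```

Note that the filter keeps both graduates and non-graduates; the degree itself is the comparison of interest, not a sample restriction.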

From there I can get employment rates for the resulting groups easily (i.e. the share who are employed, out of everyone, with not-in-the-labor-force people in the denominator).
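As a small illustration of the denominator choice, here is a sketch of that employment rate. The status labels (`"employed"`, `"unemployed"`, `"nilf"`) are made up for the example, and the calculation is unweighted; whether survey weights were applied in the actual analysis is not stated, so treat that as an open assumption.

```python
def employment_rate(statuses):
    """Share employed among everyone, including those not in the labor force.

    This differs from one minus the unemployment rate, which would drop
    not-in-the-labor-force respondents from the denominator.
    """
    statuses = list(statuses)
    if not statuses:
        raise ValueError("no respondents")
    employed = sum(1 for s in statuses if s == "employed")
    return employed / len(statuses)
```

For a group with two employed people, one unemployed person, and one person out of the labor force, this gives one half, whereas a labor-force-only denominator would give two thirds.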

Earnings is a bit trickier. The monthly CPS file doesn’t have individual-person earnings, just household or family earnings. So if someone goes back home to live with mom and dad, then mom and dad’s earnings would show up as your own. We don’t want that! So for the earnings analysis, I use “family income”, but only count people who are unmarried “household heads.” Ideally I’m trying to get people who are the only earner in their family. Additionally, this income is reported in bins rather than exactly; I just take the midpoint of each bin, which I then adjust using the CPI to 2024 dollars. This won’t be perfect but it’s a first pass.5
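The two earnings steps, bin midpoint and inflation adjustment, look roughly like this. It is a sketch under assumptions: the function names are invented, and any bin edges or CPI index values you pass in would have to come from the actual survey documentation and price series. An open-ended top bin has no midpoint, and since the handling of that case is not described above, the sketch simply refuses it.

```python
def bin_midpoint(low, high):
    """Midpoint of a closed income bin, used as a stand-in for true income."""
    if high is None:
        # Open-ended top bin: no midpoint exists, and the text does not
        # say how it was handled, so this sketch does not guess.
        raise ValueError("open-ended bin has no midpoint")
    return (low + high) / 2


def to_2024_dollars(nominal, cpi_then, cpi_2024):
    """Rescale a nominal amount by the ratio of price indexes."""
    if cpi_then <= 0:
        raise ValueError("CPI must be positive")
    return nominal * cpi_2024 / cpi_then
```

The adjustment is just a ratio: an amount earned when the index stood at 80 is worth 1.25 times as much in dollars of a year when the index stands at 100.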

Taking a Look at the Sheepskin

Let’s start by just looking at an overall sheepskin effect. This is purely associational - I’m just going to look at employment rates and average earnings (note I take the log before averaging to handle outliers).
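To see why taking the log first tames outliers, here is a minimal sketch. Averaging logs and then exponentiating gives the geometric mean, which a single very large value pulls up far less than it pulls up the ordinary average. The function name and the numbers in the usage note are made up for illustration.

```python
import math


def mean_log(values):
    """Average of natural logs; only defined for strictly positive values."""
    values = list(values)
    if not values:
        raise ValueError("no values")
    if any(v <= 0 for v in values):
        raise ValueError("log is undefined for non-positive values")
    return sum(math.log(v) for v in values) / len(values)


def geometric_mean(values):
    """Exponentiated mean of logs, back on the original dollar scale."""
    return math.exp(mean_log(values))
```

With made-up earnings of 30,000, 32,000, 35,000, and 1,000,000, the ordinary average is dominated by the one large value, while `geometric_mean` stays much closer to the three typical ones. The positivity requirement is also why the log-earnings comparison can only cover people with positive reported income.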

As you can see, the difference in employment rates and earnings from one year to the next is basically nil, up until you get a BA degree, at which point it jumps way up. Employment rates jump by 10 percentage points, and among the employed, earnings nearly double.

Importantly, I wouldn’t take away from these graphs that the non-graduation years of school have no impact. Selection plays an important role here in two ways. The first is who falls into each of these bins: among dropouts we might expect the most-able to quickly realize they’re not cutting it and drop out fast; the “<= Two years” group probably also includes some people who successfully graduated with a community college non-AA certificate; and the “4 years” group, I believe from the survey coding, includes people who really dragged on for 5+ years without graduating. The second is where people are in their careers: among 22-27 year olds like we have here, the people who dropped out quick have had longer to find jobs. I wouldn’t attempt to estimate how large the sheepskin effect is in absolute terms from this graph, but remember I’m mostly going to focus on whether the effect grows or shrinks over time - hopefully those biases are constant over time, which will let me do this!

So is it Changing?

Let’s take a look at those same graphs broken up by year.

We definitely do see that employment and inflation-adjusted earnings have both been improving over the first years of the data, especially for the graduates. Do keep in mind that period includes us coming out of the Great Recession. During that period we see what seems to be a widening sheepskin effect.

Things are less clear in the last few years of the data. My expectation is that we’d start to see small declines with the cohorts first graduating in 2021, during COVID, and bigger drops as the post-COVID graduates make up a larger portion of the 22-27 age group. There is perhaps a hint of it, not a huge amount for employment, but something is going on with earnings.

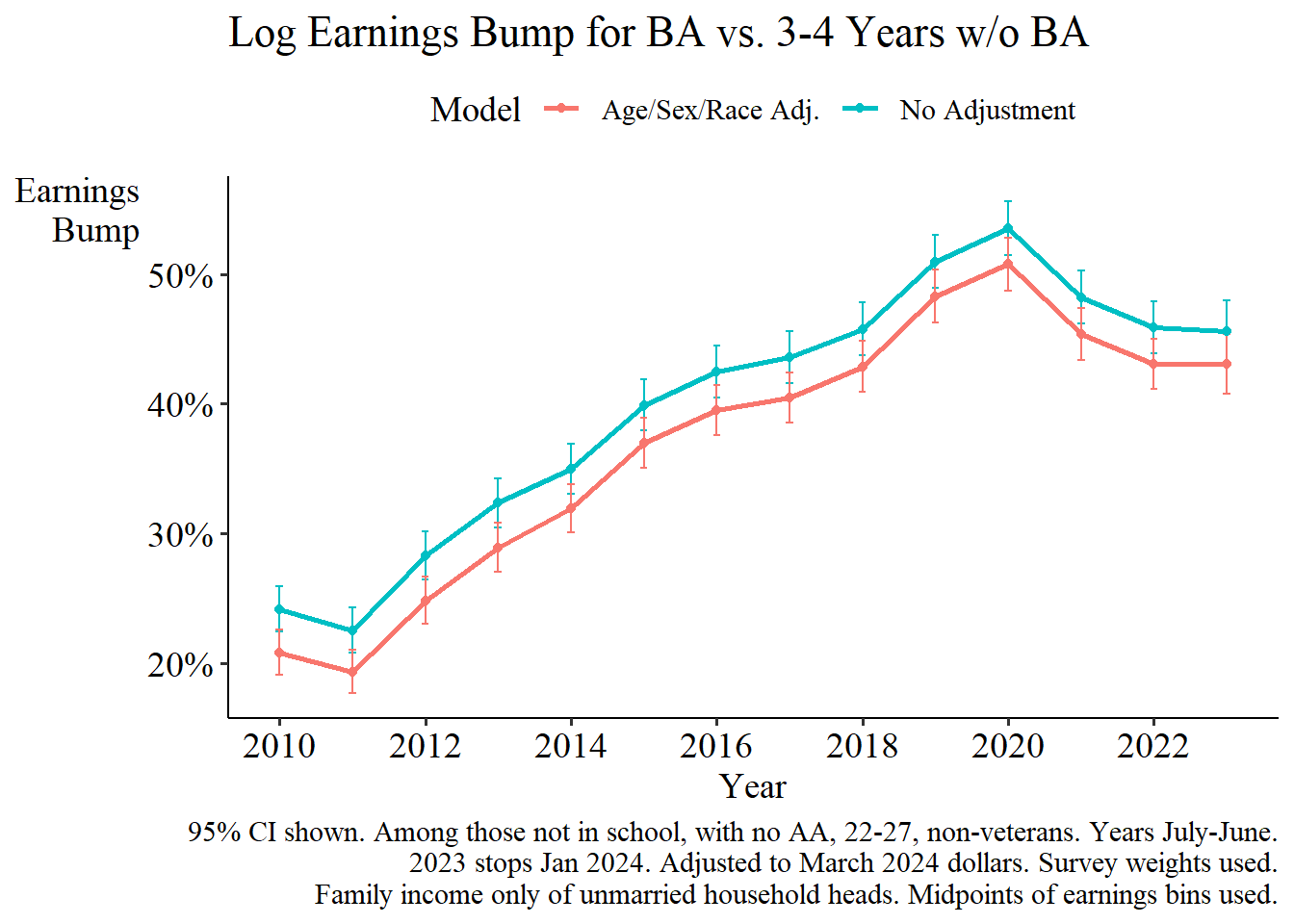

Let’s see if we can extract the effect of interest more directly. I calculate the gap between the BAs and those without a degree but with 3 or 4 years of college (dropping the <= two year group, since they are less comparable and also might include successful certificate-holders). I do this comparison twice, once looking at the raw gap, and once doing a linear-regression adjustment for age, sex, and race (white / black / American Indian or Pacific Islander / Asian / mixed and other). I use log earnings,6 and a linear probability model for employment.
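Here is a rough sketch of the two versions of the gap, written with the standard library only so it stays self-contained. It is not the code behind the graphs: the field names (`ba`, plus whatever controls you pass) and helper names are invented, categorical controls such as race would need to be expanded into dummy columns first, and a real analysis would use a statistics package that also reports standard errors. The raw gap is a difference in means; the adjusted gap is the coefficient on the BA indicator in an ordinary least squares regression. With a 0/1 employment outcome, that same regression is a linear probability model.

```python
from statistics import mean


def raw_gap(rows, outcome):
    """Mean outcome for BA holders minus mean outcome for non-degree leavers."""
    ba = [r[outcome] for r in rows if r["ba"] == 1]
    no_ba = [r[outcome] for r in rows if r["ba"] == 0]
    return mean(ba) - mean(no_ba)


def ols(X, y):
    """Solve the normal equations (X'X) b = X'y by Gauss-Jordan elimination."""
    k = len(X[0])
    # Build the augmented matrix [X'X | X'y].
    A = [
        [sum(row[i] * row[j] for row in X) for j in range(k)]
        + [sum(row[i] * yi for row, yi in zip(X, y))]
        for i in range(k)
    ]
    for col in range(k):
        # Partial pivoting: move the largest remaining entry onto the diagonal.
        pivot = max(range(col, k), key=lambda r: abs(A[r][col]))
        if abs(A[pivot][col]) < 1e-12:
            raise ValueError("regressors are collinear")
        A[col], A[pivot] = A[pivot], A[col]
        # Zero out this column in every other row.
        for r in range(k):
            if r != col:
                factor = A[r][col] / A[col][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[col])]
    return [A[i][k] / A[i][i] for i in range(k)]


def adjusted_gap(rows, outcome, controls):
    """Coefficient on the BA indicator, holding the listed controls fixed."""
    X = [[1.0, float(r["ba"])] + [float(r[c]) for c in controls] for r in rows]
    y = [float(r[outcome]) for r in rows]
    return ols(X, y)[1]  # index 0 is the intercept, index 1 is BA
```

To get a series over time, you would run both functions separately within each school year. The two numbers differ whenever graduates and non-graduates differ on the controls; for instance, if graduates in the sample skew older and earnings rise with age, the raw gap will overstate the adjusted one.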

As suspected, for both employment and earnings we see a gradual rise through the 2010s in how well college graduates were doing relative to college dropouts.7 The pattern looks basically the same whether or not you control for basic demographics.

For employment rates we see a big pandemic dip (note that “2019” extends into June 2020) where in the early pandemic, employment rates dropped by considerably more among graduates than among dropouts. But then it recovers, specifically when the very first graduates who spent an entire year in college during COVID enter the labor market in 2021! Not a howling success for my expectations. There’s a dip in 2023 but that’s a half-year ending in January 2024 anyway so it’s a smaller sample.

Earnings is different, though. Here we see a peak in 2020, followed by a gentle decline. This is more in line with what I’d expect based on my hypothesis. Note that even by the end of the data, most of the workers I’m looking at still graduated pre-COVID, even if I’m collecting data in a post-COVID period.

Conclusion

It’s still too early to tell. Isolating a sheepskin effect that speaks to how easy it is to graduate, as opposed to selection effects or labor market shifts, is hard and I certainly don’t think I’ve gotten all the way there in this analysis. On top of that it’s just too soon to really say much about this. After all, we only have a few cohorts of students who graduated post-COVID, and even in a normal time, as new graduates they’d be expected to be in the most turbulent phases of their careers. More noise means less ability to home in on a particular explanation!

Still, I do have theoretical reasons to expect the observed phenomenon I’m looking for. This is only a first pass. But you can bet I’ll be back. It may just need a few years before we have any ability to reasonably check.

There are certainly other explanations: social conditioning, networking, and so on. But these are the two that economists tend to focus on.

I won’t bother making a laundry list of cites here, but you can go on Google Scholar, type in “sheepskin effect,” and see it for yourself - these papers largely all find similar things. For a more thorough literature review on this, I recommend The Case Against Education by Bryan Caplan. I have a lot of issues with this book, including thinking it’s wrong about the inference it draws from the existence of the sheepskin effect, which is, like, the main point it’s trying to make. But it does do a very good job at accessibly laying out the evidence for the effect!

These fall into two categories of critiques, empirical and theoretical. I discuss this a lot in my paper on the topic, and these explanations are one reason why I doubt the conclusions of The Case Against Education, which I mentioned in the previous footnote. I’ll discuss just a few.

The empirical critiques include: (a) it’s just unaccounted-for selection; it’s not random who graduates and who drops out, and whatever adjustments we do in calculating sheepskin effects aren’t enough, and (b) more-skilled students are better at figuring themselves out, so if they’re going to drop out they do it fast, and if they’re not they graduate fast; this process artificially inflates what the sheepskin effect even is, in a way unrelated to signaling.

The theoretical critiques include: (c) one of the types of learning you do is in learning about your own skills - the pool of dropouts includes people who learned they aren’t cut out for college, thus the sheepskin is a reflection of human capital accumulation, and (d) the degree does provide an employer-verifiable certification of your skill, like in signaling, but it’s also largely a certification of things you learned in school rather than stuff you came in with and just needed to show off, and so reflects human capital.

Steven Ruggles, Sarah Flood, Matthew Sobek, Daniel Backman, Annie Chen, Grace Cooper, Stephanie Richards, Renae Rodgers, and Megan Schouweiler. IPUMS USA: Version 15.0 [dataset]. Minneapolis, MN: IPUMS, 2024. https://doi.org/10.18128/D010.V15.0

Now that I’m to the end of the analysis and writing this up, I wonder if I should have used the CPS ASEC supplement, which is only once a year but has way more earnings information and would have avoided that problem. I originally decided not to because its March-only release date would have given me basically no data on anyone graduating after ChatGPT released. But I barely get to say anything about that anyway so maybe I should have just gone with ASEC. Ah well, another day.

Notably, this is also over a time when people suspect there were increasing levels of grade inflation in colleges. This means either (a) there weren’t actually meaningful increases in grade inflation, (b) the grade inflation didn’t actually translate into easier graduation, (c) market forces favoring graduates were strong enough to cancel this effect out, or (d) my entire hypothesis about changes in graduation ease showing up in the sheepskin effect is wrong. To be honest my money’s on c in this case.