I Get It

As a fan of mathematics generally, I definitely understand the many, many uses of Euler’s number e. It pops up everywhere you happen to be working with growth or compounding, just as π shows up whenever you’ve got something circular (and in a million other places). Even in the study of probability, e shows up in the probability density function of the normal distribution, in Bernoulli trials, and so on.

Since it’s so intimately tied to multiplicative growth, it makes sense that we might want to use e as the base of our logarithm. It makes so much sense that we don’t blink when we call a base-e logarithm the “natural logarithm”.1

However! I am here to tell you that there’s one place where the choice of e as a logarithmic base doesn’t make a lot of sense, and that’s in the place where I (and perhaps you) personally end up using logarithms the most: regression analysis.2

What I’m going to claim in this article is that e is almost never the best pick for your logarithmic base, if what you’re doing is putting a logged variable in your regression analysis and interpreting it using a discrete change in values.3

In the best cases, it is no worse than alternatives. But often, it is materially worse than easily available alternative bases! Using the natural log correctly requires weird calculations that are prone to error, it’s worse at approximations than other bases, and there are alternatives, which nobody uses, that require neither weird calculations nor approximations!

We should stop using e as our logarithmic base in regressions. Here’s why.

Logarithms in Regression

Regression is a statistical method that lets you pick a kind of shape to describe the relationship between different variables. Then, it picks the best version of that shape. Most commonly, you might say that a straight line describes the relationship between two variables. Then, regression says “straight line? OK. If we’re doing a straight line, here’s the intercept and slope of the best straight line I can give you.”

In the above data, we think a straight line describes this relationship, so we might write

$$Y = \beta_0 + \beta_1 X + \varepsilon$$

where ε is an error term.

There are a few problems here that might lead us to want to include a logarithm in that model. The most obvious case is if we think (or observe in the data) that the variables’ relationship is not best described by a straight line, but instead has a proportional relationship. A straight line says “adding 1 to X adds some consistent value to Y,” but a proportional relationship says “increasing X by some percentage adds some consistent value to Y,” or perhaps “adding 1 to X increases Y by some consistent percentage,” or even “increasing X by some percentage increases Y by some consistent percentage.” A logarithm can turn a proportional relationship into a linear one, which is nice because, again, a straight line is the easiest thing for regression to handle.4 Depending on which of those relationships we think holds, we get:

$$Y = \beta_0 + \beta_1 \log_b(X) + \varepsilon$$

$$\log_b(Y) = \beta_0 + \beta_1 X + \varepsilon$$

$$\log_b(Y) = \beta_0 + \beta_1 \log_b(X) + \varepsilon$$

where b is the base of your logarithm (which I’ve conveniently left generic for now). This transformation rescales the axes of your data until you get what you think should be a straight line.

So, if we think our main relationship is multiplicative in some way (related to percentage changes rather than linear changes), then applying a logarithm lets our good ol’ straight line describe that relationship properly, and we can also interpret the results that way.

Another reason we may apply logs in statistical analysis generally, as well as in regression, is to deal with skewed data. Perhaps we don’t care that much about having a multiplicative relationship, but we’re dealing with a variable with a few huge outsized observations: say you’re regressing something on wealth, and Jeff Bezos happens to be in your sample with thousands of times more dollars than anyone else. This tends to confuse most regression models, which will pay way too much attention to Jeff. A logarithm can bring those outsized values back to Earth and give them less influence.

The Problem: Interpretation

Now we’ve got a nice straight-line description of our proportional relationship, which is good. That brings us to the downside of using logarithms: we don’t really care about the linear relationships between logarithms of X and/or Y, we care about the proportional relationships between X and Y themselves. So we have to interpret the result, i.e. turn linear changes in log(X) and/or log(Y) back into proportional changes in X and/or Y.

The first problem with this is already apparent. How do we interpret every other variable on our regression table? By looking at one-unit changes! If we just regress Y on X, then we interpret the coefficient on X as relating a one-unit change in X to a coefficient-unit change in Y. No additional calculation needed; the effect of interest is right on the table. With logarithms you have to do additional calculations, which separates our intuition about them, and how we deal with them, from everything else we’re used to with regression! This will come back to be a problem.

For now, though, let’s look at the two main ways to interpret logarithms in regression when we’re dealing with discrete changes (as opposed to taking the derivative and using infinitesimals): the exact way and the approximation.

The Exact Way

The exact approach uses the following formulas (I’m using X here, but they apply equally well to Y):

$$\log_b(X) + p = \log_b\left(b^p X\right)$$

or

$$\log_b\left((1+p)X\right) = \log_b(X) + \log_b(1+p)$$

In other words, a linear increase of p in your logarithm is equivalent to multiplying your original variable by b^p (a percentage increase of (b^p − 1) × 100%). Or, multiplying your original variable by 1+p (a percentage increase of p × 100%) is equivalent to adding log_b(1+p) to the logarithm.

The way this typically works out is something like this: when regressing Y on the natural log ln(X), if the coefficient on ln(X) is β, “a 10% increase in X is associated with a β × ln(1 + .1) ≈ β × .0953 unit increase in Y.” Or, if regressing ln(Y) on X and the coefficient on X is β, “a one-unit increase in X is associated with an (exp(β) − 1) × 100% increase in Y.”
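To make the two natural-log recipes concrete, here is a minimal Python sketch of each. The function names are hypothetical helpers (not from any library), and the coefficients in the usage lines are made up purely for illustration.

```python
import math


def level_log_effect(beta: float, pct: float) -> float:
    """Y regressed on ln(X): change in Y (in Y's units) for a proportional
    increase `pct` in X, where pct=0.10 means a 10% increase."""
    return beta * math.log(1 + pct)


def log_level_effect(beta: float) -> float:
    """ln(Y) regressed on X: percent change in Y for a one-unit increase in X."""
    return (math.exp(beta) - 1) * 100


# Illustrative coefficients only.
print(level_log_effect(1.0, 0.10))  # about 0.0953
print(log_level_effect(0.05))       # about 5.13 (percent)
```

Notice that nothing stops you from swapping the `log` and the `exp` between the two functions by mistake, which is exactly the kind of slip the next paragraph is worried about.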

Things are at least a little simpler if both X and Y are logged. But regardless, uh, super easy, right? No problem to keep the direction of your logs and exponentials correct. Surely this additional by-hand calculation would never lead to error.5

The Approximation

You may have noticed in the previous section that the 10% increase in X led to a ln(1+.1) ≈ .0953 linear increase in ln(X). And .0953 is pretty close to .1! So the approximation method says to just round that up to .1 and say “a p% increase in X is equivalent to a (p/100) linear increase in log(X)”.

Heck, you may have even learned this approximation in your econometrics class without being told it was an approximation! The textbook I started teaching undergraduate econometrics with doesn’t mention it’s an approximation, nor does the textbook I was assigned in one of my graduate econometrics courses. But it is. And it’s not an innocuous one either! I most commonly see increases of 10% applied when interpreting logarithms in regression. At 10%, you’re almost half a percentage point off the truth (a true 10% increase moves ln(X) by .0953, which the approximation reads as 9.53%)! At 20% you’re almost two percentage points off. At 30% you’re nearly four percentage points off.
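Those gaps are easy to check by hand, but here is a small Python sketch that does it, assuming the usual reading in which a change of d in ln(X) is reported as a 100·d percent change in X. The helper name is hypothetical.

```python
import math


def approx_gap(pct: float) -> float:
    """Percentage points by which the approximation understates a true pct% increase.

    A true pct% increase moves ln(X) by ln(1 + pct/100); the approximation reads
    that change, times 100, as the percent increase.
    """
    return pct - 100 * math.log(1 + pct / 100)


for pct in (1, 10, 20, 30):
    print(f"true {pct}%: approximation is off by {approx_gap(pct):.2f} points")
```

The 1% row shows why the approximation is fine for small changes; the gap grows roughly with the square of the change.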

The approximation works fine as long as you’re working with small percentage changes, say 1%. But with no well-known cap on how high you’re allowed to go, and plenty of people not even aware it is an approximation, we see plenty of uses at 10% or higher. In any other context, adding a half-percentage-point bias to your result for absolutely no reason would not be acceptable.

The exact method leads to error because it’s easy to do it wrong. The approximation leads to a little error just because it’s an approximation, and leads to a lot of error because the quality of the approximation breaks down quickly, and not everyone seems to know that.

Why is this e’s Fault? And Some Better Numbers

The problems I outlined in the previous section (how the additional calculations needed to get the exact right answer are confusing and error-prone, and how the approximation method breaks down) seem like problems with logarithms in general, right? So what’s my beef with natural logarithms specifically?

Using Bases Near 1

We want to exactly interpret a linear increase of p in log_b(X) as a proportional increase in X itself of b^p, or a proportional increase of (1+p) in X as a linear increase of log_b(1+p).

Whatever we do, this whole thing will require us to do a bunch of extra calculations, take exponents, logarithms, ugh! Like, say we want to know the effect of a 10% increase in X. We have to calculate log_b(1.1)! Who knows what that is? Break out the calculator! And how do we get the confidence interval from that again?

Unless, of course, the base is b = 1.1. Then it’s just 1.

Yep, that’s right. If you can pick ahead of time the proportional increase you’re interested in (which we often do anyway; we usually set up a natural logarithm and then just interpret it with a single percentage increase), and stick that in the base, then your exact-calculation value of interest is 1. You know, 1, the typical number you’re dealing with when interpreting a regression coefficient?

The value of this is most obvious when you’re regressing Y on log(X), because then you can have a regression table that looks like the first column here:

What’s the effect of a 10% increase in X? A .148-unit increase in Y, just like it says in the table. No extra calculations, no new opportunities for error, no special tricks to get confidence intervals. Done. This is an incredibly obvious improvement.6
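Here is a minimal simulation sketch of why the base-1.1 coefficient can be read straight off the table. The data are simulated under my own assumptions (Y linear in log_1.1(X), true slope set to .148 only so the numbers look familiar); it does not reproduce the table above.

```python
import numpy as np

rng = np.random.default_rng(0)

# Simulated data, an assumption for illustration: Y is linear in log_1.1(X).
x = rng.lognormal(mean=3.0, sigma=1.0, size=5_000)
log11_x = np.log(x) / np.log(1.1)  # change of base: log_1.1(X) = ln(X) / ln(1.1)
y = 2.0 + 0.148 * log11_x + rng.normal(scale=1.0, size=x.size)

# With deg=1, np.polyfit returns [slope, intercept].
slope_11, intercept_11 = np.polyfit(log11_x, y, 1)
slope_ln, _ = np.polyfit(np.log(x), y, 1)


def predict(v):
    """Fitted Y at X = v from the base-1.1 regression."""
    return intercept_11 + slope_11 * np.log(v) / np.log(1.1)


# The base-1.1 slope equals the predicted change in Y from a 10% increase in X.
x0 = 20.0
print(slope_11, predict(1.1 * x0) - predict(x0))

# Changing the base only rescales the slope: slope_ln = slope_11 / ln(1.1).
print(slope_ln, slope_11 / np.log(1.1))
```

The second print is the "just rescaling the variables" point in code: the natural-log fit carries the same information, but you have to multiply by ln(1.1) to get the 10% effect back.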

How about if we’re regressing log_1.1(Y) on X? Well, we can’t specify the exact percentage increase we want, but eyeballing becomes a lot easier. A one-unit increase in X translates into (coefficient) 10% increases. In the above regression table, a one-unit increase is 2.343 10% increases. If we want to eyeball it, we can say that’s 23.43%. But we may as well get the exact value, since it’s way easier to get than with a natural logarithm (just ask yourself: how do you calculate 2.343 10% increases in a row?). It’s 1.1^2.343 = 1.2502, or a 25.02% increase.

Even in the less-applicable case of regressing log(Y) on X, the exact approach is easier than with a natural log, so we’re more likely to bother doing it, and to do it right if we do. Heck, even if we stop at our eyeball approach, we’ll likely do better with our approximation this way than with a natural logarithm. If we were dealing with a true 25.02% effect while regressing ln(Y) on X, the coefficient on X would be .2233, which we’d approximate as a 22.33% increase. That is an entire percentage point further from the truth than the 23.43% approximation we got with our 1.1 base.
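The arithmetic in the last two paragraphs can be checked with a few lines of Python. The only input is the 2.343 coefficient from the example table; everything else follows from it.

```python
import math

coef_11 = 2.343  # coefficient on X with log_1.1(Y) as the outcome (example table)

# Exact: 2.343 successive 10% increases.
exact_pct = (1.1 ** coef_11 - 1) * 100
# Eyeball with base 1.1: 2.343 "10% increases" read as 23.43%.
eyeball_11 = coef_11 * 10

# The same true effect in a ln(Y)-on-X regression, and its usual eyeball reading.
coef_ln = math.log(1 + exact_pct / 100)
eyeball_ln = coef_ln * 100

print(f"exact {exact_pct:.2f}%, base-1.1 eyeball {eyeball_11:.2f}%, ln eyeball {eyeball_ln:.2f}%")
```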

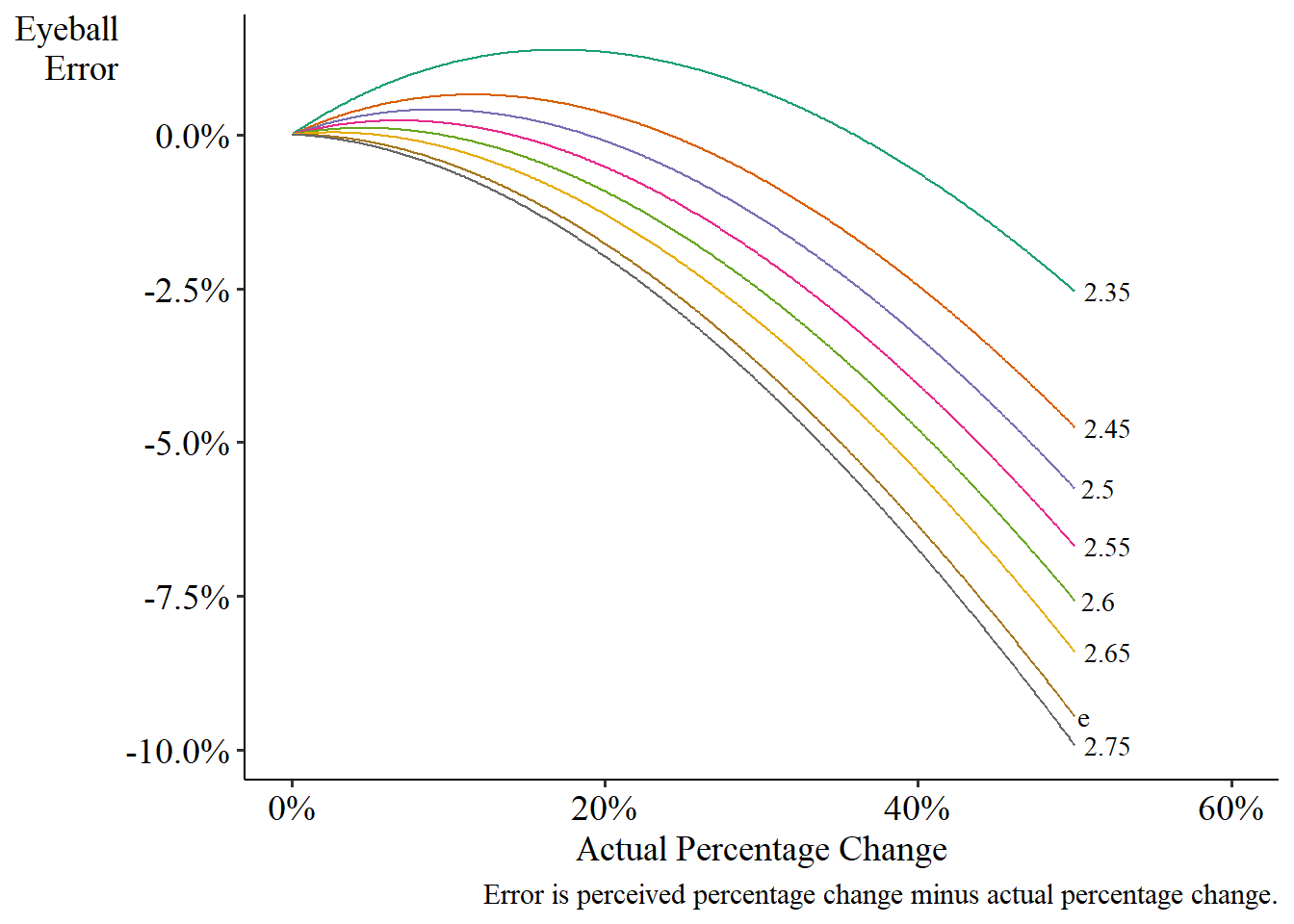

In fact, while I’m arguing that this approach makes the exact interpretation so easy that we’d always do it, it also makes eyeball interpretations better in general, not just in this case, if we’re really itching to eyeball. The graph above shows the extent of the error. If we do happen to pick a base of 1.1, it will beat base-e for all percentage increases except really tiny ones, where the difference is too small to care about. Plus, if we are interested in a percentage increase far from the base we originally picked, like up near 40%, where both perform poorly (even though 1.1 is still better), we can just… redo it with a different base! Don’t care about 10% and instead want better performance around 40%? Use base 1.4. Don’t like using 1.1 because base-e wins when what you want is a 1% change? Just use base 1.01 and you’ll do even better than e! e, on the other hand, is stubbornly a constant and can’t do that.
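A rough Python sketch for reproducing this kind of comparison, assuming the eyeball rule is "coefficient × (b − 1) × 100%" for a base b near 1 and "coefficient × 100%" for base e. The grid and the helper name are my own choices, not necessarily the ones behind the figure.

```python
import numpy as np


def eyeball_error(true_pct, base, step):
    """Percentage-point error when the coefficient c on X (outcome log_base(Y))
    is eyeballed as c * step * 100 percent, and the true effect is true_pct percent."""
    c = np.log1p(true_pct / 100) / np.log(base)  # exact coefficient for that effect
    return np.abs(true_pct - c * step * 100)


pcts = np.arange(0.5, 50.5, 0.5)
err_e = eyeball_error(pcts, np.e, 1.0)  # natural log, read as c * 100%
err_11 = eyeball_error(pcts, 1.1, 0.1)  # base 1.1, read as c * 10%

print("smallest change on the grid where base 1.1 beats e:", pcts[np.argmax(err_11 < err_e)])

# Far from 10%, just move the base: base 1.4 is exact at 40%, base 1.01 at 1%.
for pct in (1.0, 40.0):
    print(pct, eyeball_error(pct, np.e, 1.0), eyeball_error(pct, 1.1, 0.1),
          eyeball_error(pct, 1 + pct / 100, pct / 100))
```

Running it lets you see for yourself where the small-change region in which e wins ends, and how a base matched to the change of interest drives the eyeball error to zero.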

How about in the case where we regress log(Y) on log(X)? Well, in one sense it doesn’t matter: the coefficient would be exactly the same using any logarithmic base. But there are still two benefits to a base like 1.1. First, it becomes easier to interpret any non-logged covariates in your model. Second, yeah, the results are the same, but the interpretation is way easier to remember. With base-1.1 logarithms, a 10% increase in X translates into .326 of a 10% increase in Y, which is 1.1^.326 = 1.0316, or a 3.16% increase.7 To get there with a natural log, well, we could do that same calculation since it’s the same coefficient, but if we look up the proper equation we find the wildly unwieldy

$$\%\Delta Y = \left(e^{\beta \ln(1 + p)} - 1\right) \times 100\%, \qquad p = .1$$
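A short Python check that the two routes give the same answer for the .326 coefficient from the example table: the base-1.1 reading, and the natural-log formula with p = .1.

```python
import math

beta = 0.326  # log-log coefficient from the example table (identical in any base)

# Base 1.1: a 10% rise in X is one unit of log_1.1(X), so Y rises by beta "10% increases".
pct_base_11 = (1.1 ** beta - 1) * 100

# Natural log, same coefficient, via (exp(beta * ln(1 + p)) - 1) * 100% with p = 0.1.
pct_ln = (math.exp(beta * math.log(1 + 0.1)) - 1) * 100

print(round(pct_base_11, 2), round(pct_ln, 2))  # both 3.16
```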

So there we have it. Pick the percentage increase you’re interested in, put it in your logarithmic base, and then interpret exactly. Way better for regressing Y on log(X), considerably better for regressing log(Y) on X, and technically the same but intuitively better for regressing log(Y) on log(X). Less work, fewer mistakes, results right smack dab on the regression table. It’s a winner.

Using Bases Near e, Then Approximating

Alright, I’ve given my spiel about bases near 1, and I think it’s a pretty convincing case. But I will admit that it is pretty weird. To be honest, this isn’t just about changing the base of the logarithm; it’s using logarithms in an entirely different way. So the problem isn’t really e so much as it’s the way we use logarithms in regression.

Right?

Nope! e’s still a bad pick for a base if you’re doing logarithms the normal way.

Or at least it is if you’re approximating. If you’re using an exact interpretation, with your properly-arranged ln(1+p)s and exp(β)s, then it doesn’t matter what base you use. e is fine there.

But what if you’re doing the typical approximation, where a .1 change in ln(X) corresponds to roughly a 10% change in X? Turns out e isn’t a particularly good pick for a base when you do that.

Lowering the base slightly below e leads to very, very slightly worse performance for small percentage changes, but then consistent, and quite large, improvement for larger ones. The lower you go with the base, the worse the low percentages look and the better the higher percentages look.

At the very least, I should be able to convince you that a base of 2.65 is better than e if you’re going to use the traditional approximation method. At its worst, 2.65 underperforms e by only .017 percentage points. Not bad. And once the true change reaches just 2.7%, 2.65 dominates e, beating it by a full percentage point by the time you get to a 50% change (although, again, neither is particularly great for large changes). Why not switch?

Pragmatically I think 2.6 is quite attractive if you’re going to use the traditional approximation. The worst underperformance relative to e is only .05 percentage points, basically nothing, and it dominates e for all percentage changes above 4.7%. Plus, it’s the best performer around the 10% mark, a common percentage increase to use. In fact, if the true percentage change is 10%, the approximation with a base of 2.6 is really very accurate, and is only off the truth by .025 percentage points.
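For anyone who wants to check these numbers, here is a Python sketch that compares the traditional approximation under bases 2.65, 2.6, and e on a fine grid of true changes. It assumes the approximation reads a change d in log_b(X) as a 100·d percent change; the grid resolution and helper name are my own choices, so the printed crossover points are grid approximations.

```python
import numpy as np


def approx_error(true_pct, base):
    """Percentage-point error of the traditional approximation (a change d in
    log_base(X) read as a 100*d percent change) when the true change is true_pct%."""
    d = np.log1p(true_pct / 100) / np.log(base)
    return np.abs(true_pct - 100 * d)


pcts = np.linspace(0.01, 50, 50_000)
err_e = approx_error(pcts, np.e)

summary = {}
for base in (2.65, 2.6):
    err_b = approx_error(pcts, base)
    summary[base] = {
        "worst_gap_vs_e": float(np.max(err_b - err_e)),   # largest underperformance vs. e
        "beats_e_from": float(pcts[err_b < err_e].min()),  # first grid point where it wins
        "error_at_10pct": float(approx_error(10.0, base)),
        "advantage_at_50pct": float(approx_error(50.0, np.e) - approx_error(50.0, base)),
    }
    print(base, {k: round(v, 3) for k, v in summary[base].items()})
```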

So, deciding to stick to the traditional approach, and an approximation? I’m not sure why, given how easy an exact interpretation is, but if you are, then e’s not your friend! Try a different base. May I interest you in a 2.6?

Writing a Whole Paper Without the Letter e

I can see why we use e, or at least why we traditionally have. Sure, if you’re working with infinitesimals, and taking a derivative instead of using a discrete change, then a base-e logarithm makes sense (but are you?). Sure, if you’re building a theoretical model with some form of growth, your equations might naturally feature a base-e logarithm and you might want your regression equations to match (although really, using a different base is just rescaling the variables; surely your theory can handle that!). And from that base, it’s what we teach in class, and what we remember learning. So we do it, and the headaches it causes and mistakes we make as a result are probably small enough that we don’t even notice them.

I know. It’s weird. It’s just not what we’re used to. I recognize it’s a losing battle. No matter how convincing you may find this article, or my original published article making this same argument, and no matter how very real the gains are, are you really going to buck the trend enough to present an entirely different way of doing logarithms and send that to a referee? Especially the bases-near-1 stuff. A superior product, I think, and easier to use, to boot! But I’m trying to sell you the Dvorak keyboard over here, and you’re probably just fine with your QWERTY.

I know it won’t happen. But a guy can dream. A guy can dream.

This post is a retread of an article of mine (published version, arXiv) that I believe, with only the tiniest bit of bitterness I promise, has gotten too little attention. Only one cite so far, and it looks like they actually don’t cite it any more but just forgot to remove it from the bibliography! No takers yet on the method. Perhaps you will be the brave soldier who takes the first charge.

There is something to recommend the use of natural logarithms when you are only considering infinitesimally small changes. In other words, if you are interpreting your regression by taking the derivative, and truly working with infinitesimal changes (as one might do when calculating an elasticity), then go with base-e, since your derivative is a little cleaner that way. But most of the time this is not what we’re doing, at least not in the papers I read! Most of the time I see people trying to find out how a certain-sized change in X corresponds to a change in Y.

A common misconception is that this is done to make the data normally distributed. This is not true. First, who cares if our variables are normally distributed? It’s not an assumption of OLS (it’s really not). We assume the errors are normally distributed when doing our standard error calculations, but not the data. Second, taking the log of a skewed variable isn’t guaranteed to make it normal. That only works if it happens to be log-normal in the first place!
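A quick Python simulation makes the second point concrete. The distributions are my own choice for illustration: an exponential variable (right-skewed, but not log-normal) and a log-normal one. Only the log-normal one ends up roughly symmetric after logging.

```python
import numpy as np


def skewness(v):
    """Sample skewness: mean cubed deviation divided by the cubed standard deviation."""
    z = v - v.mean()
    return np.mean(z**3) / np.mean(z**2) ** 1.5


rng = np.random.default_rng(1)
n = 200_000
skewed = rng.exponential(scale=1.0, size=n)             # right-skewed, not log-normal
lognormal = rng.lognormal(mean=0.0, sigma=1.0, size=n)  # right-skewed and log-normal

print("exponential:", skewness(skewed), "-> after log:", skewness(np.log(skewed)))
print("log-normal: ", skewness(lognormal), "-> after log:", skewness(np.log(lognormal)))
```

The logged exponential variable does not become normal; it swings to a clear left skew instead.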

Unsurprisingly, when writing my paper on this topic, I looked for cases where all the interpretations I mention in this post had been applied. I wanted examples, but also I wanted to see if people got them wrong! There are three example papers I include in the paper. All three have errors in interpreting their logs. I didn’t even have to look hard for them. These are three of the first four papers I happened to find that did these interpretative methods. The fourth one also had an error.

What if you want multiple different percentage increases? You could run the model again, which wouldn’t be too hard, and would again put all the results directly in your regression table. Alternatively, it’s arguably easier to get multiple percentage values with this approach than with a natural log. Just use the change-of-base formula! Want a 20% change instead of a 10% change? Just multiply the coefficient by log(1.2)/log(1.1). Done.
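The change-of-base trick fits in one line; here it is as a hypothetical Python helper, using the .148 coefficient from the example table. A pct% increase in X is log_1.1(1 + pct/100) = ln(1 + pct/100)/ln(1.1) units of log_1.1(X).

```python
import math


def effect_of_increase(beta_10: float, pct: float) -> float:
    """Effect on Y of a pct% increase in X, given the coefficient beta_10 on log_1.1(X)."""
    return beta_10 * math.log(1 + pct / 100) / math.log(1.1)


beta_10 = 0.148  # coefficient on log_1.1(X): effect of a 10% increase (example table)
print(effect_of_increase(beta_10, 10))  # the table value itself
print(effect_of_increase(beta_10, 20))  # about 0.283
```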

Or did you happen to think that log-log was the easy one where a 1% increase in X is simply a β% increase in Y, i.e. the elasticity? Nope! Or rather, yes, but only for infinitesimally small changes in X. If you’re doing a discrete change in X that doesn’t work any more. But don’t feel too bad. Uh… so did I. And I’m pretty sure that’s what I learned when I took econometrics! Something to fix for the second edition (at which point that link will no longer have an error in that section).
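To see how far the elasticity reading drifts for discrete changes, here is a small Python comparison using the .326 log-log coefficient from the example table. The helper names are hypothetical.

```python
def elasticity_reading(beta: float, pct: float) -> float:
    """The infinitesimal reading: a pct% increase in X gives beta * pct percent in Y."""
    return beta * pct


def exact_loglog(beta: float, pct: float) -> float:
    """Exact percent change in Y for a discrete pct% increase in X in a log-log model."""
    return ((1 + pct / 100) ** beta - 1) * 100


beta = 0.326  # log-log coefficient from the example table
for pct in (1, 10, 50):
    print(pct, round(elasticity_reading(beta, pct), 3), round(exact_loglog(beta, pct), 3))
```

At 1% the two agree closely; by 10% and especially 50% the elasticity reading overstates the effect.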